TensorFlowServingTileProcessor¶

Purpose¶

Connects to a remote TensorFlow Serving inference server to process voxels tile by tile, as fed by ApplyTileProcessorPageWise.

Usage¶

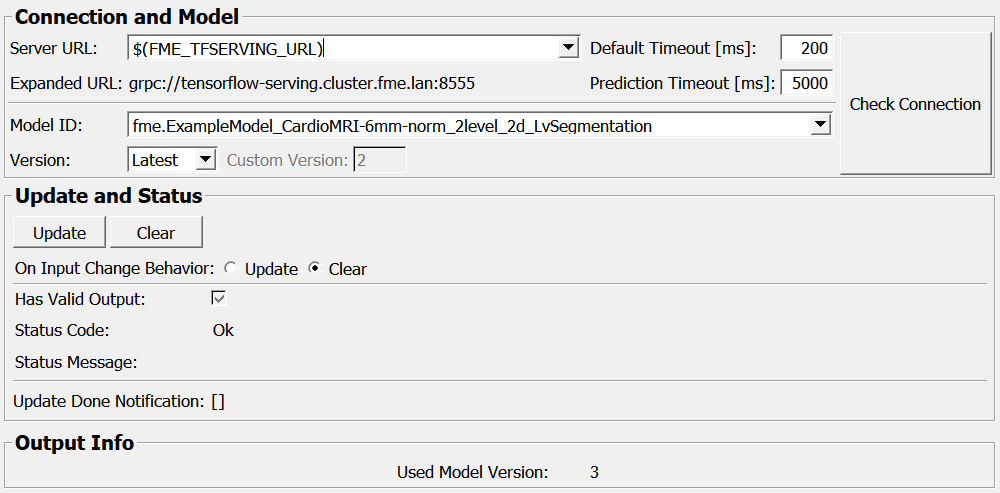

Assuming you have a TensorFlow Serving inference server running, enter its URL into Server URL (e.g. http://localhost:8501), and enter the Model ID you want to use.

To verify that the server can be reached and the model was found, press the Check Connection button. If successful, it lists all versions available for the current model and all outputs available for the currently selected model version.
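A comparable check can be run outside MeVisLab against TensorFlow Serving's REST API, which reports the version status of a model at `/v1/models/<name>`. The following is a minimal sketch, not the module's own implementation: the helper names are hypothetical, the 0.2 s timeout only mirrors the default communication timeout, and it assumes the Model ID can be used unchanged as the model name on the server.

```python
import json
import urllib.request


def model_status_url(server_url: str, model_name: str) -> str:
    """Build the TensorFlow Serving REST URL that reports model version status."""
    return f"{server_url.rstrip('/')}/v1/models/{model_name}"


def available_versions(status: dict) -> list:
    """Return the sorted version numbers the server reports as AVAILABLE."""
    return sorted(
        int(entry["version"])
        for entry in status.get("model_version_status", [])
        if entry.get("state") == "AVAILABLE"
    )


def fetch_status(server_url: str, model_name: str, timeout_s: float = 0.2) -> dict:
    """Query the server; raises urllib.error.URLError if it cannot be reached."""
    url = model_status_url(server_url, model_name)
    with urllib.request.urlopen(url, timeout=timeout_s) as response:
        return json.load(response)
```

With a running server, `available_versions(fetch_status("http://localhost:8501", "MyModel"))` would list the loaded versions; `MyModel` is a placeholder name.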

Then press Update to set up the processor.

Connect the processor to an ApplyTileProcessorPageWise module and make sure to edit the output tile size, padding, and dimension mapping. See the ApplyTileProcessorPageWise help for more details.

See the example network for how to apply these modules to process images.

Tips¶

If supported by your server and client setup, use gRPC for better performance.

When requesting multiple pages at the same time (e.g. by using an ImageCache at the output), inference requests will be issued in parallel to increase performance (depending on your MeVisLab preferences setup for the number of ML processing threads).

Windows¶

Default Panel¶

Output Fields¶

outCppTileProcessor¶

- name: outCppTileProcessor, type: PythonTileProcessorWrapper(MLBase), deprecated name: outCppTileClassifier¶

Provides a C++ TileProcessor wrapping the Python object that communicates with the TensorFlow Serving backend. Usually connected to ProcessTiles or ApplyTileProcessor.

Parameter Fields¶


Visible Fields¶

Server URL¶

- name: inServerUrl, type: String, default: $(FME_TFSERVING_URL), deprecated name: inServer¶

URL at which the TensorFlow Serving inference server is contacted (e.g. grpc://localhost:8500 or http://localhost:8501). The prefix (grpc:// or http://) determines the protocol to be used. You can use MDL variables here, e.g. ‘$(MY_TFS_SERVER_URL)’, which can be defined in mevislab.prefs, for example.
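To illustrate how the prefix selects the protocol, here is a small sketch using Python's standard `urllib.parse`. The function name is hypothetical and this is not the module's actual parsing code; it only accepts the two prefixes named above.

```python
from urllib.parse import urlsplit


def split_server_url(url: str):
    """Return (protocol, host, port) for a grpc:// or http:// server URL."""
    parts = urlsplit(url)
    # Only the two prefixes mentioned in this help page are accepted here.
    if parts.scheme not in ("grpc", "http"):
        raise ValueError(f"unsupported or missing protocol prefix in {url!r}")
    return parts.scheme, parts.hostname, parts.port
```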

Expanded URL¶

- name: outExpandedServerUrl, type: String, persistent: no¶

Expanded version of the Server URL.
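The expansion itself is done by MeVisLab. As a rough illustration of what replacing `$(NAME)` placeholders means, the following sketch substitutes them from a plain dictionary. The function name and the choice to leave unknown variables untouched are assumptions, not MeVisLab behavior.

```python
import re

# Matches $(NAME) where NAME looks like an identifier.
_VARIABLE = re.compile(r"\$\(([A-Za-z_][A-Za-z0-9_]*)\)")


def expand_variables(text: str, variables: dict) -> str:
    """Replace each $(NAME) with variables[NAME]; unknown names stay as-is."""
    return _VARIABLE.sub(lambda m: variables.get(m.group(1), m.group(0)), text)
```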

Default Timeout [ms]¶

- name: inDefaultTimeout_ms, type: Integer, default: 200, minimum: -1¶

Timeout (in milliseconds) for all server communication except tile prediction requests.

Prediction Timeout [ms]¶

- name: inPredictionTimeout_ms, type: Integer, default: 30000, minimum: -1, deprecated name: inTimeout_ms¶

Timeout (in milliseconds) for a tile prediction request. As prediction can take a long time depending on the model and the performance of the inference server, this value can be chosen independently of the default communication timeout.

Max. Tries per Batch¶

- name: inNumPredictionTriesPerBatch, type: Integer, default: 3, minimum: 1¶

Maximum number of tries per batch before an error is reported (and processing is likely aborted). Trying more than once can be useful if your connection is flaky and occasional timeouts occur, or if the server’s resources are occasionally exhausted, e.g. due to multithreading pushing many requests at the same time. Note that an unavailable server should normally be detected on update already (not only when tiles are requested). Still, increasing this number prolongs the time until you detect that your server has gone down during processing.
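The retry behavior described here can be pictured as a simple bounded loop. This is a sketch under assumptions, not the module's code: `send_batch` is a hypothetical callable that raises `TimeoutError` or `ConnectionError` when a request fails, and no delay between tries is modeled.

```python
def predict_with_retries(send_batch, batch, max_tries: int = 3):
    """Call send_batch(batch) up to max_tries times and return the first result."""
    if max_tries < 1:
        raise ValueError("max_tries must be at least 1")
    last_error = None
    for _ in range(max_tries):
        try:
            return send_batch(batch)
        except (TimeoutError, ConnectionError) as error:
            last_error = error  # remember the failure and try again
    raise RuntimeError(f"batch failed after {max_tries} tries") from last_error
```

Under this model, if every try runs into the full prediction timeout, the default settings (3 tries, 30000 ms) would take about 90 seconds before the error surfaces.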

Model ID¶

- name: inModelId, type: String, deprecated name: inModelName¶

Model ID to load on the server, including potential namespaces/hierarchies separated by ‘.’ (e.g. fme.MyModel.sagittal).

Version¶

- name: inModelVersionSelectionMode, type: Enum, default: Latest¶

Version selection mode

Values:

| Title | Name | Description |
|---|---|---|
| Latest | Latest | Choose latest model (highest version number) available |
| Custom | Custom | Select custom model version |

Custom Version¶

- name: inCustomModelVersion, type: Integer, default: 1, minimum: 1¶

Custom model version (integer > 0).

Used Model Version¶

- name: outUsedModelVersion, type: Integer, persistent: no¶

Indicates the model version actually used.
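The relationship between the selection mode, the custom version, and the version actually used can be sketched as follows. The function is hypothetical, and raising an error for an unavailable custom version is an assumption about reasonable behavior, not a statement about the module.

```python
def select_model_version(available, mode="Latest", custom_version=1):
    """Pick a version from the list of available integer version numbers."""
    if not available:
        raise ValueError("no model versions available")
    if mode == "Latest":
        return max(available)  # highest version number wins
    if mode == "Custom":
        if custom_version not in available:
            raise ValueError(f"version {custom_version} is not available")
        return custom_version
    raise ValueError(f"unknown selection mode {mode!r}")
```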

Disable batch-level multithreading¶

- name: inDisableBatchLevelMultithreading, type: Bool, default: FALSE¶

Prevent attempting to process multiple batches in parallel, e.g. to save network bandwidth or server memory/compute resources.

If unset, multi-batch inference operations (e.g. using ProcessTiles) may use up to the number of threads specified in the MeVisLab preferences as “Maximum Threads Used for Image Processing”, which can speed up inference substantially, especially for smaller batches. Otherwise, all batches will be processed sequentially.
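The difference between the two settings can be illustrated with a thread pool from Python's standard library. This is only a sketch of the general idea: `predict` stands for a hypothetical function that sends one batch to the server, and `max_threads` stands in for the preference value.

```python
from concurrent.futures import ThreadPoolExecutor


def process_batches(predict, batches, max_threads=4, disable_multithreading=False):
    """Run predict on every batch and return the results in input order."""
    if disable_multithreading or max_threads <= 1:
        # Sequential processing: one request at a time.
        return [predict(batch) for batch in batches]
    with ThreadPoolExecutor(max_workers=max_threads) as pool:
        # Executor.map keeps the order of the inputs.
        return list(pool.map(predict, batches))
```

Parallel requests mainly help when each request spends most of its time waiting for the server, which is why the gain tends to be larger for small batches.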

Update¶

- name: update, type: Trigger¶

Initiates update of all output field values.

Clear¶

- name: clear, type: Trigger¶

Clears all output field values to a clean initial state.

On Input Change Behavior¶

- name: onInputChangeBehavior, type: Enum, default: Clear, deprecated name: shouldAutoUpdate, shouldUpdateAutomatically¶

Declares how the module should react if a value of an input field changes.

Values:

| Title | Name | Deprecated Name |
|---|---|---|
| Update | Update | TRUE |
| Clear | Clear | FALSE |

updateDone¶

- name: updateDone, type: Trigger, persistent: no¶

Notifies that an update was performed (check the status interface fields to identify success or failure).

Has Valid Output¶

- name: hasValidOutput, type: Bool, persistent: no¶

Indicates validity of output field values (success of computation).

Status Code¶

- name: statusCode, type: Enum, persistent: no¶

Reflects the module’s status (successful or failed computation) as one of a set of predefined enumeration values.

Values:

| Title | Name |
|---|---|
| Ok | Ok |
| Invalid input object | Invalid input object |
| Invalid input parameter | Invalid input parameter |
| Internal error | Internal error |

Status Message¶

- name: statusMessage, type: String, persistent: no¶

Gives additional, detailed information about the status code as a human-readable message.
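From a MeVisLab Python script, the update and status fields could be used together roughly as sketched below. The field names are those documented on this page; the helper function is hypothetical, and it assumes that touching `update` completes the update before the call returns.

```python
def update_processor(module, server_url, model_id):
    """Set the connection fields, trigger an update and return the used version.

    module is expected to offer MeVisLab's field(name) accessor, e.g. the
    object returned by ctx.module(...) for an instance of this module.
    """
    module.field("inServerUrl").value = server_url
    module.field("inModelId").value = model_id
    module.field("update").touch()
    if not module.field("hasValidOutput").value:
        code = module.field("statusCode").value
        message = module.field("statusMessage").value
        raise RuntimeError(f"update failed ({code}): {message}")
    return module.field("outUsedModelVersion").value
```

In a network script this might be called as `update_processor(ctx.module("TensorFlowServingTileProcessor"), "http://localhost:8501", "fme.MyModel.sagittal")`, where the instance name is an assumption about your network.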